How to Automate MongoDB Database Backups in Linux

We have a setup of one primary with multiple secondaries. Even if we configure a highly available setup and backups, native backups are so specia...

It's easy to recover a MongoDB backup using Percona Backup for MongoDB https://medium.com/@datablogs/restore-and-point-in-time-restore-with-per...

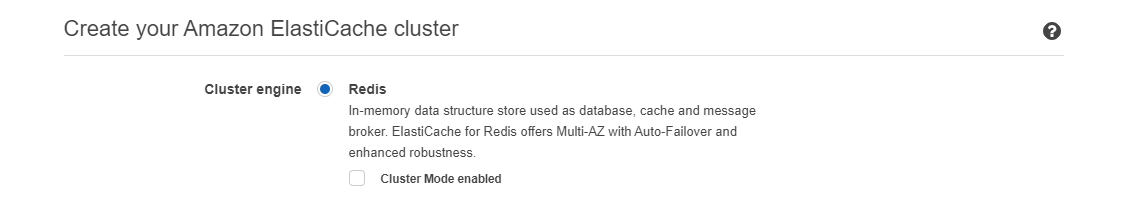

Compared with Redis, AWS ElastiCache gives multiple options to cache data in the cloud. It's enhanced with two ways of access control opt...

Sharing simple steps to configure a Cassandra cluster in a few minutes. Prerequisite: install Java (JRE) from the Oracle site and verif...

The Percona Backup tool for MongoDB sounds interesting! I just want to try out and explore the tool with Docker today! Docker is firs...

MongoDB supports horizontal scaling of data with the help of the shard key. Shard key selection should be good, and a poor shard key spl...
